6.7: Integrated Services

In the previous sections, we identified both the principles and the mechanisms used to provide quality of service in the Internet. In this section, we consider how these ideas are exploited in a particular architecture for providing quality of service in the Internet: the so-called Intserv (Integrated Services) Internet architecture. Intserv is a framework developed within the IETF to provide individualized quality-of-service guarantees to individual application sessions. Two key features lie at the heart of the Intserv architecture:

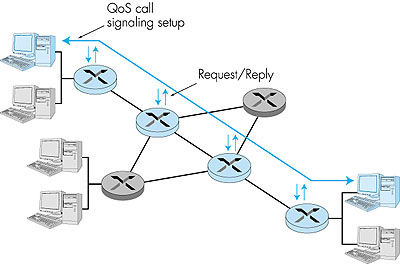

Let us now consider the steps involved in call admission in more detail:

Figure 6.32: Per-element call behavior

The Intserv architecture defines two major classes of service: guaranteed service and controlled-load service. We will see shortly that each provides a very different form of quality-of-service guarantee.

6.7.1: Guaranteed Quality of Service

The guaranteed service specification, defined in RFC 2212, provides firm (mathematically provable) bounds on the queuing delays that a packet will experience in a router. While the details behind guaranteed service are rather complicated, the basic idea is really quite simple. To a first approximation, a source's traffic characterization is given by a leaky bucket (see Section 6.6) with parameters (r, b), and the requested service is characterized by a transmission rate, R, at which packets will be transmitted. In essence, a session requesting guaranteed service is requiring that the bits in its packets be guaranteed a forwarding rate of R bits/sec.

Given that traffic is specified using a leaky bucket characterization, and a guaranteed rate of R is being requested, it is also possible to bound the maximum queuing delay at the router. Recall that with a leaky bucket traffic characterization, the amount of traffic (in bits) generated over any interval of length t is bounded by rt + b. Recall also from Section 6.6 that when a leaky bucket source is fed into a queue that guarantees that queued traffic will be serviced at a rate of at least R bits per second, the maximum queuing delay experienced by any packet will be bounded by b/R, as long as R is greater than r.
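The b/R bound can be checked numerically. The following is a minimal sketch under the fluid model only, not the RFC 2212 computation; the function names `fluid_delay_bound` and `worst_case_delay`, the time step, and the example numbers are illustrative assumptions rather than anything defined by Intserv.

```python
def fluid_delay_bound(r: float, b: float, R: float) -> float:
    """Upper bound (seconds) on queuing delay for an (r, b) leaky-bucket
    source served at a rate of at least R bits/sec, fluid model only."""
    if R <= r:
        # Follows the condition stated in the text: R must exceed r.
        raise ValueError("the b/R bound in the text requires R > r")
    return b / R


def worst_case_delay(r: float, b: float, R: float,
                     dt: float = 1e-3, horizon: float = 5.0) -> float:
    """Simulate a greedy source: a burst of b bits at t = 0, then r bits/sec.
    Returns the largest delay observed, measured as backlog / R."""
    backlog = b                      # the whole burst arrives at time zero
    worst = backlog / R
    for _ in range(int(horizon / dt)):
        # Each step, r*dt bits arrive and up to R*dt bits are served.
        backlog = max(0.0, backlog + r * dt - R * dt)
        worst = max(worst, backlog / R)
    return worst


if __name__ == "__main__":
    # Hypothetical session: r = 1 Mbps, b = 200,000 bits, R = 2 Mbps.
    r, b, R = 1_000_000.0, 200_000.0, 2_000_000.0
    print(fluid_delay_bound(r, b, R))   # 0.1
    print(worst_case_delay(r, b, R))    # never exceeds the bound above
```

In this sketch the worst delay occurs for the bits at the tail of the initial burst; because R exceeds r, the backlog only shrinks afterward, which is why the bound depends on b and R but not on how long the session lasts.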
The actual delay bound guaranteed under the guaranteed service definition is slightly more complicated, due to packetization effects (the simple b/R bound assumes that data is in the form of a fluid-like flow rather than discrete packets), the fact that the traffic arrival process is subject to the peak rate limitation of the input link (the simple b/R bound assumes that a burst of b bits can arrive in zero time), and possible additional variations in a packet's transmission time.

6.7.2: Controlled-Load Network Service

A session receiving controlled-load service will receive "a quality of service closely approximating the QoS that same flow would receive from an unloaded network element" [RFC 2211]. In other words, the session may assume that a "very high percentage" of its packets will successfully pass through the router without being dropped and will experience a queuing delay in the router that is close to zero. Interestingly, controlled-load service makes no quantitative guarantees about performance: it specifies neither what constitutes a "very high percentage" of packets nor what quality of service closely approximates that of an unloaded network element.

The controlled-load service targets real-time multimedia applications that have been developed for today's Internet. As we have seen, these applications perform quite well when the network is unloaded, but rapidly degrade in performance as the network becomes more loaded.